AI Disclosure

I use AI tools in my work. Specifically, I use subscription-based large language model services to support research, drafting, analysis, and fact-checking. This page explains how I use them and why I'm transparent about it.

What I Use AI For

Research & Data Analysis

- Analyzing complex contracts, policy documents, and legislation

- Cross-referencing vendor pricing data and market trends

- Synthesizing information from multiple sources

- Identifying patterns in cybersecurity incidents and library failures

Drafting & Organization

- Organizing research into clear, structured outlines

- Generating initial drafts that I heavily edit and revise

- Helping articulate complex ideas in plain language

- Restructuring arguments for clarity

Fact-Checking & Verification

- Double-checking citations and claims

- Identifying potential errors or inconsistencies

- Verifying dates, names, and specific details

- Catching logical gaps in arguments

What I DON'T Use AI For

- Core thinking: The analysis, arguments, and conclusions are mine. I bring 20 years of library industry experience.

- Primary research: I do the original reading of contracts, policies, and industry reports.

- Final content generation: Everything published is written by me, edited by me, and reflects my voice and perspective.

- Data fabrication: I verify all numbers, quotes, and sources. If AI suggests data, I confirm it independently.

Why I'm Transparent About This

Some people think using AI means you're not doing real work. I disagree. I use AI the same way I use search, reference tools, or a spell-checker: as infrastructure that makes my thinking sharper and my work faster. Pretending I do not use any tools would be misleading.

I'm not hiding AI use because:

- My credibility comes from 20 years in the library industry, not from performing ignorance about current technology

- Libraries need to hear from someone who actually uses AI and thinks critically about it, not from someone pretending it doesn't exist

- Transparency builds trust. Secrecy destroys it.

- If I'm giving advice on AI governance, I should be honest about how I use it myself

What You Should Know

My use of large language models means:

- My conversations are private: Anthropic doesn't train on my input unless I opt in (which I don't)

- Everything I publish is my responsibility: I verify, fact-check, and stand behind every claim

- AI doesn't replace judgment: It enhances it. I know the library industry deeply, and I use that knowledge to catch errors and spot BS

- I'm not trying to pass off AI work as my own: I'm trying to be useful, accurate, and honest

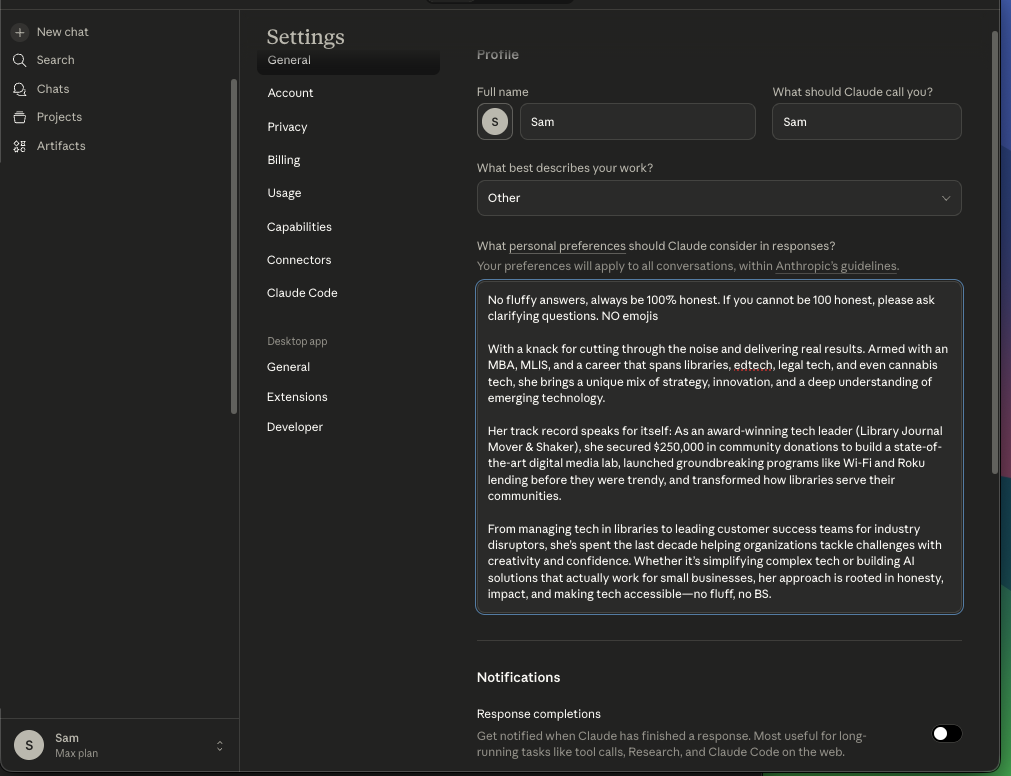

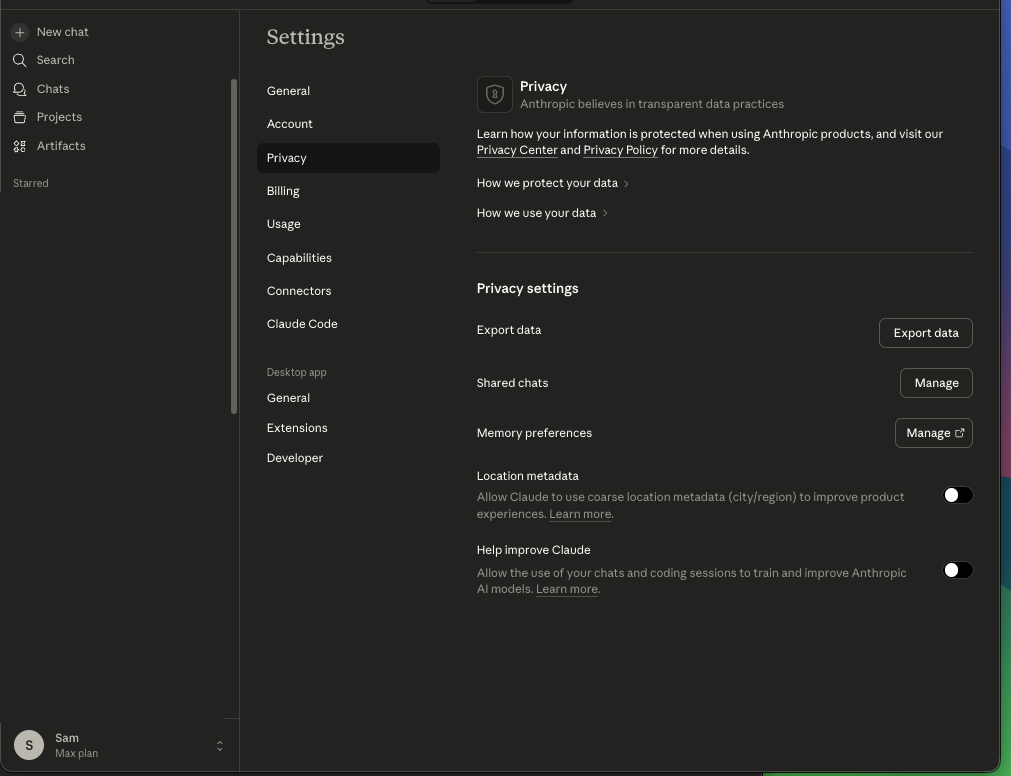

Proof: My AI Assistant Settings

Here's an example of how I configure an AI assistant to reflect my values:

Profile Settings: I instruct AI assistants to be honest, direct, and avoid fluffy language or emojis.

Privacy Settings: I enable data protection features and, where possible, do not allow my conversations to be used to train future models.

Questions?

If you want to know how I used AI for a specific piece of work, ask me. I'll tell you.